Some days, I wonder if I live in a parallel world.

I want more efficient software (to lower overall power usage of our society, to avoid throwing away hardware after a couple of years, to be able to do more with less).

I fight centralisation of data/knowledge/power in IT (promote open protocols, self-hosting, open source, decentralisation).

I do want a more egalitarian society (no more barriers because of handicaps or upbringing in a non-privileged environment. Improving our democracy with services that help everyone by reducing/eliminating bureaucracy).

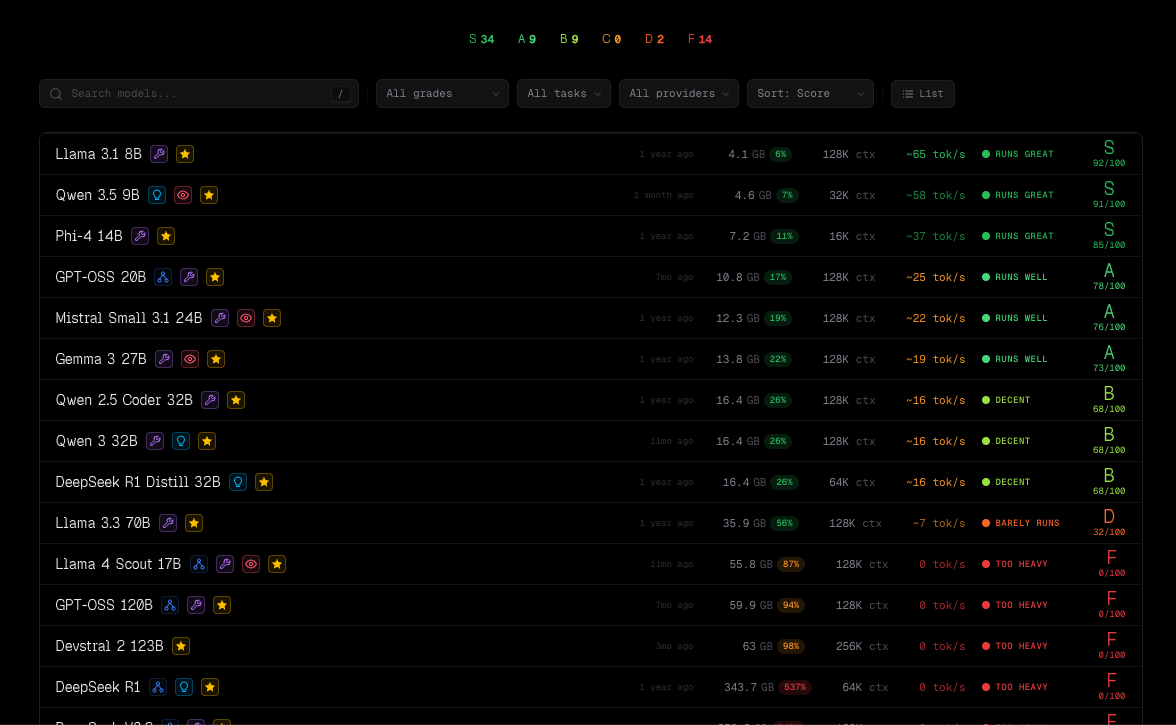

I do not want to see our world burn (see point above about reducing waste. But also promoting local LLM usage, and not defaulting to wasteful services for tasks that can be done locally).

Yet... I don't fight genAI. On the contrary, I deeply believe it can help us achieve the above. Faster.

The problem is way too many people are assuming that because (a lot of) people misuse it, the technology must be the issue.

Maybe focus on the people misusing it, and not the technology? Banning usage of genAI altogether in software projects is, IMHO, both counter-productive and impossible.

Are we also going to ban people from using LSP? Linters? Fuzzy search tools? Spell-checkers? Translation tools? Speech-to-text assistants?

Heck, how will *you* know if I used an LLM to assist me? Because of the quality of the contribution I provided? Because I'm not knowledgeable about your project and design? Because English is not my native language, and I used a tool for translating text?

Or maybe it's because, shocking, I used it as yet-another-tool. And it didn't replace my brain. I still want to ensure what I'm delivering is correct, useful and maintainable. It doesn't replace all the brainstorming, investigation, analysis and tests that I do. But it helps me iterate on all of those faster.

What is a PITA is random contributors dumping stuff they didn't properly review/test. The vibecoders. But how is that different from random "code dumps" by people who did a "wrong" fix? In both cases, it's a lack of education.

Instead of banning genAI altogether, maybe specify what is expected from the human using it. I.e. that person must "own" what the tool produced, know exactly what it contains, why it does it that way, etc.

BTW, Jellyfin has a pretty decent and comprehensive set of rules which are a good middle ground, go read them:

https://jellyfin.org/docs/general/contributing/llm-policies/

#rant #LLM #genAI #software

🙀🚂🐧
