I think this needs to be repeated, since I tend to be quite negative about all of the 'AI' hype:

I am not opposed to machine learning. I used machine learning in my PhD and it was great. I built a system for predicting the next elements you'd want to fetch from disk or a remote server that didn't require knowledge of the algorithm that you were using for traversal and would learn patterns. This performed as well as a prefetcher that did have detailed knowledge of the algorithm that defined the access path. Modern branch predictors use neural networks. Machine learning is amazing if:

- The problem is too hard to write a rule-based system for, or the requirements change quickly enough that writing one isn't worth it, and

- The value of a correct answer is much higher than the cost of an incorrect answer.

The second of these is really important. Most machine-learning systems will have errors (the exceptions are those where ML is really used for compression[1]). For prefetching, branch prediction, and so on, the cost of a wrong answer is very low: you just do a small amount of wasted work. The benefit of a correct answer is huge: you don't sit idle for a long period. These are basically perfect use cases.
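As a rough sketch of that trade-off, the expected gain from acting on a prediction can be written as accuracy times benefit, minus the error rate times the cost of being wrong. The function names and the numbers below (100 units of stall avoided on a hit, 5 units of wasted work on a miss) are made up for illustration, not measurements from any real prefetcher.

```python
def expected_gain(accuracy, benefit, cost):
    """Average payoff per prediction: wins when right, pays `cost` when wrong."""
    return accuracy * benefit - (1 - accuracy) * cost


def break_even_accuracy(benefit, cost):
    """Accuracy at which acting on predictions stops losing on average."""
    return cost / (benefit + cost)


# Hypothetical prefetcher: a hit avoids 100 units of stall, a miss wastes 5.
print(expected_gain(0.5, benefit=100, cost=5))   # 47.5
print(break_even_accuracy(benefit=100, cost=5))  # a little under 5%

# Flip the ratio (small benefit, large cost) and 50% accuracy is a net loss.
print(expected_gain(0.5, benefit=5, cost=100))   # -47.5
```

With a cheap wrong answer, even a mediocre predictor pays off. With an expensive one, the same predictor is a liability.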

Similarly, face detection in a camera is great. If you can find faces and adjust the focal depth automatically to keep them in focus, you improve photos, and if you do it wrong then the person can tap on the bit of the photo they want to be in focus to adjust it, so even if you're right only 50% of the time, you're better than the baseline of right 0% of the time.

In some cases, you can bias the results. Maybe a false positive is very bad, but a false negative is fine. Spam filters (which have used machine learning for decades) fit here. Marking a real message as spam can be problematic because the recipient may miss something important, whereas letting the occasional spam message through wastes a few seconds. Blocking a hundred spam messages a day is a huge productivity win. You can tune the probabilities to hit this kind of threshold. And you can't easily write a rule-based algorithm for spotting spam because spammers will adapt their behaviour.
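One way to picture that tuning, as a minimal sketch: given messages that a classifier has already scored, pick the cut-off that minimises total cost when a false positive is weighted much more heavily than a false negative. The scores, labels, and cost weights here are invented for illustration, and `pick_threshold` is a hypothetical helper, not part of any real spam filter.

```python
# (spam probability from some classifier, whether the message really is spam)
SCORED = [
    (0.95, True), (0.90, True), (0.80, False), (0.70, True),
    (0.40, True), (0.20, False), (0.10, False),
]


def pick_threshold(scored, fp_cost, fn_cost):
    """Return (threshold, total_cost) minimising the weighted error cost.

    A message is flagged as spam when its score is >= threshold.
    """
    # Try every observed score, plus one value above all of them
    # (which corresponds to never flagging anything).
    candidates = sorted({p for p, _ in scored} | {1.01})
    best = None
    for t in candidates:
        false_pos = sum(1 for p, spam in scored if p >= t and not spam)
        false_neg = sum(1 for p, spam in scored if p < t and spam)
        total = false_pos * fp_cost + false_neg * fn_cost
        if best is None or total < best[1]:
            best = (t, total)
    return best


# Equal costs: a low cut-off catches every spam message at the price
# of flagging one real message.
print(pick_threshold(SCORED, fp_cost=1, fn_cost=1))    # (0.4, 1)

# Losing a real message is 100x worse than seeing a spam message:
# the cut-off rises and two spam messages get through instead.
print(pick_threshold(SCORED, fp_cost=100, fn_cost=1))  # (0.9, 2)
```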

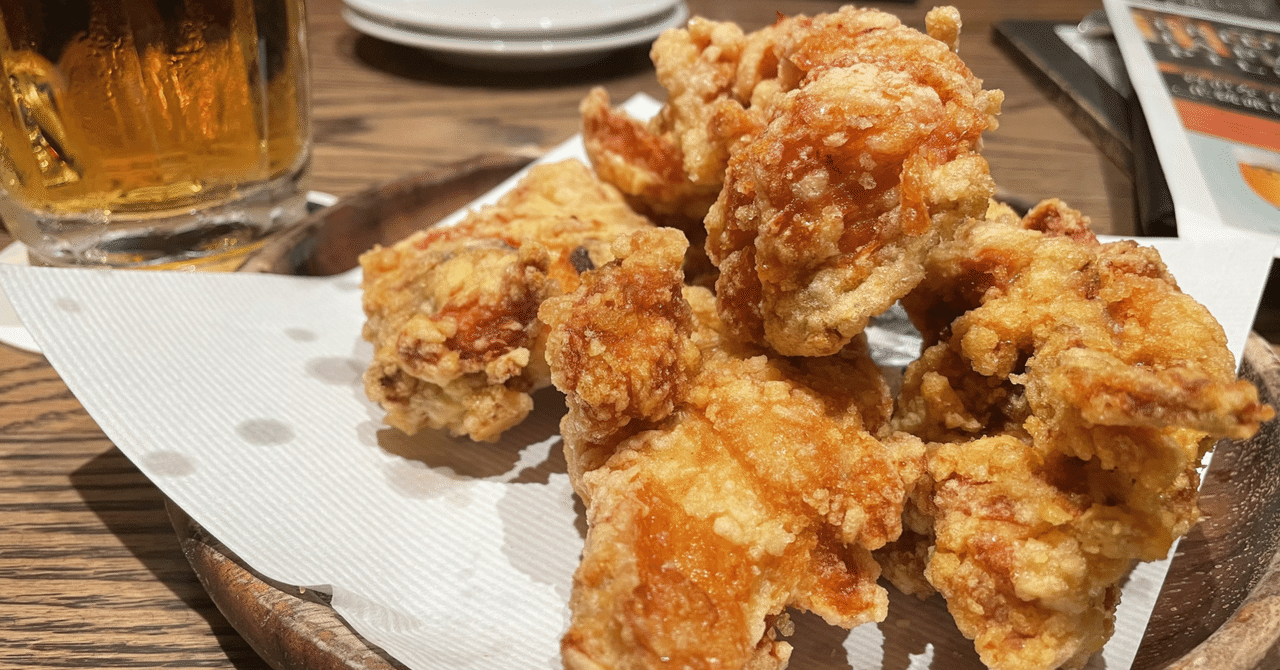

Translating a menu is probably fine: the worst that can happen is that you get to eat something unexpected. Unless you have a specific food allergy, in which case you might die from a translation error.

And that's where I start to get really annoyed by a lot of the LLM hype. It's pushing machine-learning approaches into places where sometimes giving the wrong answer causes significant harm. And it's doing so while trying to outsource the liability to the customers who are using these machines in the ways in which they are advertised as working. It's great for translation! Unless a mistranslated word could kill a business deal or start a war. It's great for summarisation! Unless missing a key point could cost you a load of money. It's great for writing code! Unless a security vulnerability would cost you revenue, or a copyright infringement lawsuit would kill your business because something from the training set accidentally ended up in your codebase in contravention of its license. And so on. Lots of risks that are outsourced and liabilities that are passed directly to the user.

And that's ignoring all of the societal harms.

[1] My favourite of these is actually very old. The hyphenation algorithm in TeX trains short Markov chains on a corpus of words with ground truth for correct hyphenation. The result is a Markov chain that is correct on most words in the corpus and is much smaller than the corpus. The next step uses it to predict the correct breaking points in all of the words in the corpus and records the outliers. This gives you a generic algorithm that works across a load of languages and is guaranteed to be correct for all words in the training corpus and is mostly correct for others. English and American have completely different hyphenation rules for mostly the same set of words, and both end up with around 70 outliers that need to be in the special-case list in this approach. Writing a rule-based system for American is moderately easy, but for English is very hard. American breaks on syllable boundaries, which are fairly well defined, but English breaks on root words and some of those depend on which language we stole the word from.
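The general shape of that approach (a small model plus a list of the corpus words it gets wrong) can be sketched as below. This is not TeX's actual algorithm: `toy_model` is a hand-written stand-in for the learned model, and the five-word corpus and its break positions are invented for illustration.

```python
VOWELS = set("aeiou")

# Ground truth: word -> set of break positions (index where the next part starts).
CORPUS = {
    "better": frozenset({3}),  # bet-ter
    "winter": frozenset({3}),  # win-ter
    "garden": frozenset({3}),  # gar-den
    "table": frozenset({2}),   # ta-ble
    "paper": frozenset({2}),   # pa-per
}


def toy_model(word):
    """Stand-in for the learned model: break between two consonants
    that sit between vowels (vowel, consonant | consonant, vowel)."""
    breaks = set()
    for i in range(1, len(word) - 2):
        before, left, right, after = word[i - 1], word[i], word[i + 1], word[i + 2]
        if (before in VOWELS and left not in VOWELS
                and right not in VOWELS and after in VOWELS):
            breaks.add(i + 1)
    return frozenset(breaks)


def build_exceptions(corpus, model):
    """Run the model over the whole corpus and record every word it gets wrong."""
    return {w: truth for w, truth in corpus.items() if model(w) != truth}


def hyphenate(word, exceptions, model=toy_model):
    """Exceptions win; everything else falls through to the model."""
    return exceptions.get(word, model(word))


exceptions = build_exceptions(CORPUS, toy_model)
print(sorted(exceptions))  # ['paper', 'table']

# Correct for every corpus word by construction, best-effort for anything else.
assert all(hyphenate(w, exceptions) == truth for w, truth in CORPUS.items())
```

The model and the exception list together reproduce the corpus exactly while storing far less than the corpus itself, which is why this use of ML behaves like compression rather than prediction.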
